RabbitMQ Cluster with Consul and Vault

Almost two years ago I wrote an article about RabbitMQ clustering, RabbitMQ in cluster. It was one of the first posts on my blog, and it’s really hard to believe it has been two years since I started it. Anyway, one of the questions about that article inspired me to return to the subject one more time. The question pointed to a problem with the approach to setting up the cluster. That approach assumes that we manually attach new nodes to the cluster by executing the command rabbitmqctl join_cluster with the name of an existing cluster node as a parameter. If I remember correctly, it was the only available method of creating a cluster at that time. Today we have more choices, which illustrates the evolution of RabbitMQ during the last two years. A RabbitMQ cluster can be formed in a number of ways:

- Manually with rabbitmqctl (as described in my article RabbitMQ in cluster)

- Declaratively by listing cluster nodes in config file

- Using DNS-based discovery

- Using AWS (EC2) instance discovery via a dedicated plugin

- Using Kubernetes discovery via a dedicated plugin

- Using Consul discovery via a dedicated plugin

- Using etcd-based discovery via a dedicated plugin

Today, I’m going to show you how to create a RabbitMQ cluster using service discovery based on HashiCorp’s Consul. Additionally, we will add Vault to our architecture in order to use its secrets engine feature for managing the credentials used to access RabbitMQ. We will set up this sample on the local machine using Docker images of RabbitMQ, Consul and Vault. Finally, we will test our solution using a simple Spring Boot application that sends messages to the cluster and listens for incoming ones. That application is available in the GitHub repository sample-haclustered-rabbitmq-service in the branch consul.

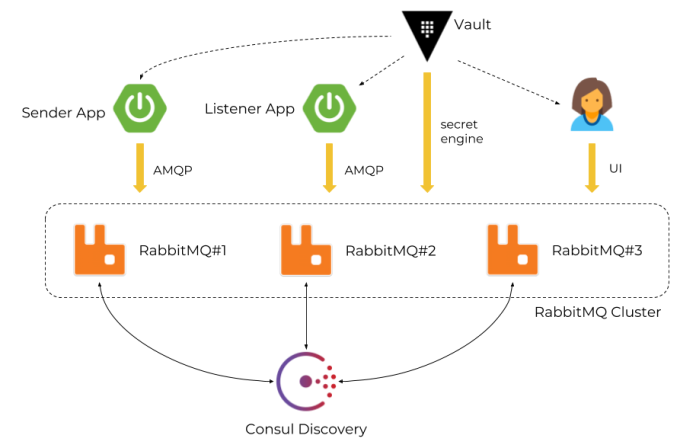

Architecture

We use Vault as a credentials manager when applications try to authenticate against RabbitMQ nodes or when a user tries to login to RabbitMQ web admin console. Each RabbitMQ node registers itself after startup in Consul and retrieves a list of nodes running inside a cluster. Vault is integrated with RabbitMQ using a dedicated secrets engine. Here’s an architecture of our sample solution.

1. Configure RabbitMQ Consul plugin

The integration between RabbitMQ and Consul is provided by the plugin rabbitmq-peer-discovery-consul. This plugin is not enabled by default in the official RabbitMQ Docker container. So, the first step is to build our own Docker image, based on the official RabbitMQ image, that enables the required plugin. By default, the main RabbitMQ configuration file is available under the path /etc/rabbitmq/rabbitmq.conf inside a Docker container. To override it we just use the COPY statement as shown below. The following Dockerfile takes RabbitMQ with the management web console as the base image and enables the rabbitmq_peer_discovery_consul plugin.

FROM rabbitmq:3.7.8-management

COPY rabbitmq.conf /etc/rabbitmq

RUN rabbitmq-plugins enable --offline rabbitmq_peer_discovery_consul

Now, let’s take a closer look at our plugin configuration settings. Because I run Docker on Windows, Consul is not available under the default localhost address, but on 192.168.99.100. So, first we need to set that IP address using the property cluster_formation.consul.host. We also need to set Consul as the default peer discovery implementation by setting the name of the plugin in the property cluster_formation.peer_discovery_backend. Finally, we have to set two additional properties to make it work in our local Docker environment. They control the address of the RabbitMQ node that is sent to Consul during the registration process. It is important to compute it properly, and not to send, for example, localhost. With cluster_formation.consul.svc_addr_auto set to true the address is computed automatically, and after setting cluster_formation.consul.svc_addr_use_nodename to false the node registers itself using the host name instead of the node name. We can set the host name of the container in its run command. Here’s the full RabbitMQ configuration file used in the demo for this article.

loopback_users.guest = false

listeners.tcp.default = 5672

hipe_compile = false

management.listener.port = 15672

management.listener.ssl = false

cluster_formation.peer_discovery_backend = rabbit_peer_discovery_consul

cluster_formation.consul.host = 192.168.99.100

cluster_formation.consul.svc_addr_auto = true

cluster_formation.consul.svc_addr_use_nodename = false
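Before building the image it can be handy to verify that the file really contains the four peer discovery settings discussed above. The following is only a minimal sketch: the class name PeerDiscoveryConfigCheck is hypothetical, and it assumes that java.util.Properties is a good enough approximation of the key = value format of rabbitmq.conf for such a simple check.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

// Hypothetical helper, not part of RabbitMQ or of the sample repository.
public class PeerDiscoveryConfigCheck {

    // The settings described in the article as necessary for Consul-based discovery.
    static final String[] REQUIRED_KEYS = {
        "cluster_formation.peer_discovery_backend",
        "cluster_formation.consul.host",
        "cluster_formation.consul.svc_addr_auto",
        "cluster_formation.consul.svc_addr_use_nodename"
    };

    // Returns the required keys that are absent from the given configuration text.
    public static List<String> missingKeys(String confText) {
        Properties props = new Properties();
        try {
            // Assumption: "key = value" lines parse the same way as Java properties.
            props.load(new StringReader(confText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        List<String> missing = new ArrayList<>();
        for (String key : REQUIRED_KEYS) {
            if (props.getProperty(key) == null) {
                missing.add(key);
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        // Reads rabbitmq.conf from the current directory unless a path is given.
        Path path = Path.of(args.length > 0 ? args[0] : "rabbitmq.conf");
        List<String> missing = missingKeys(Files.readString(path));
        System.out.println(missing.isEmpty() ? "OK" : "Missing: " + missing);
    }
}
```

Run it from the directory that contains the Dockerfile; it requires Java 11 or newer because of Files.readString.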

After saving the configuration visible above in the file rabbitmq.conf we can proceed to building our custom Docker image with RabbitMQ. This image is available in my Docker repository under alias piomin/rabbitmq, but you can also build it by yourself from Dockerfile by executing the following command.

$ docker build -t piomin/rabbitmq:1.0 .

Sending build context to Docker daemon 3.072kB

Step 1 : FROM rabbitmq:3.7.8-management

---> d69a5113ceae

Step 2 : COPY rabbitmq.conf /etc/rabbitmq

---> aa306ef88085

Removing intermediate container fda0e21178f9

Step 3 : RUN rabbitmq-plugins enable --offline rabbitmq_peer_discovery_consul

---> Running in 0892a42bffef

The following plugins have been configured:

rabbitmq_management

rabbitmq_management_agent

rabbitmq_peer_discovery_common

rabbitmq_peer_discovery_consul

rabbitmq_web_dispatch

Applying plugin configuration to rabbit@fda0e21178f9...

The following plugins have been enabled:

rabbitmq_peer_discovery_common

rabbitmq_peer_discovery_consul

set 5 plugins.

Offline change; changes will take effect at broker restart.

---> cfe73f9d9904

Removing intermediate container 0892a42bffef

Successfully built cfe73f9d9904

2. Running RabbitMQ cluster on Docker

In the previous step we successfully created a Docker image of RabbitMQ configured to run in cluster mode using Consul discovery. Before running this image we need to start an instance of Consul. Here’s the command that starts the Docker container with Consul and exposes it on port 8500.

$ docker run -d --name consul -p 8500:8500 consul

We will also create a Docker network to enable communication between containers by hostname. It is required in this scenario, because each RabbitMQ container is registered using container hostname.

$ docker network create rabbitmq

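Now, we can run our three clustered RabbitMQ containers. We will set a unique hostname for every container (using the -h option) and attach all of them to the same Docker network. We also have to set the environment variable RABBITMQ_ERLANG_COOKIE to the same value on every node, because nodes can only communicate with each other if they share the same Erlang cookie.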

$ docker run -d --name rabbit1 -h rabbit1 --network rabbitmq -p 30000:5672 -p 30010:15672 -e RABBITMQ_ERLANG_COOKIE='rabbitmq' piomin/rabbitmq:1.0

$ docker run -d --name rabbit2 -h rabbit2 --network rabbitmq -p 30001:5672 -p 30011:15672 -e RABBITMQ_ERLANG_COOKIE='rabbitmq' piomin/rabbitmq:1.0

$ docker run -d --name rabbit3 -h rabbit3 --network rabbitmq -p 30002:5672 -p 30012:15672 -e RABBITMQ_ERLANG_COOKIE='rabbitmq' piomin/rabbitmq:1.0

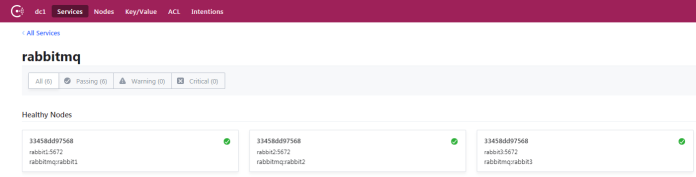

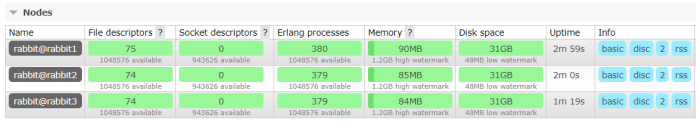

After running all three instances of RabbitMQ we can first take a look on the Consul web console. You should see there the new service called rabbitmq. This value is the default name of a cluster set by RabbitMQ Consul plugin. We can override inside rabbitmq.conf using cluster_formation.consul.svc property.

We can check out if the cluster has been succesfully started using RabbitMQ web management console. Every node is exposing it. I just had to override default port 15672 to avoid port conflicts between three running instances.
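The registration can also be checked without the web console by calling Consul’s health endpoint for the rabbitmq service. Here’s a minimal sketch using the HTTP client built into Java 11 and newer. The class name ConsulRabbitCheck is hypothetical, and the Consul address is the one assumed throughout this article.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical helper that asks Consul which instances of a service are healthy.
public class ConsulRabbitCheck {

    // Builds a GET request for /v1/health/service/<name>.
    // The "passing" parameter limits the result to instances whose checks pass.
    public static HttpRequest healthRequest(String consulBaseUrl, String serviceName) {
        return HttpRequest.newBuilder()
                .uri(URI.create(consulBaseUrl + "/v1/health/service/" + serviceName + "?passing"))
                .GET()
                .build();
    }

    public static void main(String[] args) throws Exception {
        // Assumption: Consul is reachable on the Docker host address used in this article.
        HttpRequest request = healthRequest("http://192.168.99.100:8500", "rabbitmq");
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        // The body is a JSON array with one entry per registered node.
        System.out.println(response.statusCode());
        System.out.println(response.body());
    }
}
```

If the array comes back empty right after startup, the nodes may not have reported their health check yet, so it is worth retrying after a few seconds or dropping the passing parameter.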

3. Integrating RabbitMQ with Vault

In the two previous steps we successfully ran a cluster of three RabbitMQ nodes based on Consul discovery. Now, we will add Vault to our sample system to dynamically generate user credentials. Let’s begin by running Vault on Docker. You can find detailed information about it in my previous article Secure Spring Cloud Microservices with Vault and Nomad. We will run Vault in development mode using the following command.

$ docker run --cap-add=IPC_LOCK -d --name vault -p 8200:8200 vault

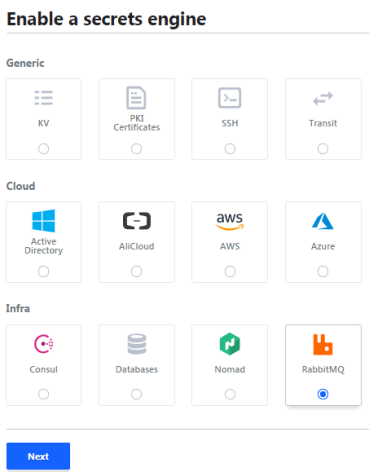

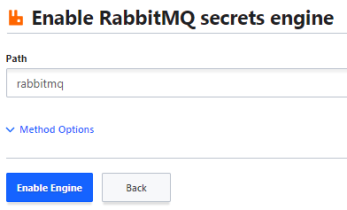

You can copy the root token from container logs using docker logs -f vault command. Then you have to login to the Vault web console available under address http://192.168.99.100:8200 using this token and enable RabbitMQ secret engine as shown below.

And confirm.

You can easily run Vault commands using the terminal provided by the web admin console or do the same thing using the HTTP API. The first command visible below writes the connection details. We just need to pass the RabbitMQ management address and the admin user credentials. The provided configuration points to RabbitMQ node #1, but the changes are then replicated to the whole cluster.

$ vault write rabbitmq/config/connection connection_uri="http://192.168.99.100:30010" username="guest" password="guest"

The next step is to configure a role that maps a name in Vault to virtual host permissions.

$ vault write rabbitmq/roles/default vhosts='{"/":{"write": ".*", "read": ".*"}}'

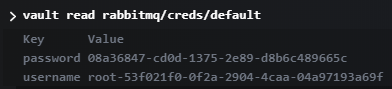

We can test our newly created configuration by running command vault read rabbitmq/creds/default as shown below.
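The same read can be issued through Vault’s HTTP API, mentioned earlier as an alternative to the terminal. The sketch below assumes that the secrets engine is mounted under the path rabbitmq and that the token is passed in an environment variable named VAULT_TOKEN. Both the class name VaultRabbitCreds and that variable are my assumptions, not something Vault requires.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical helper that requests dynamic RabbitMQ credentials from Vault.
public class VaultRabbitCreds {

    // Builds a GET request for /v1/rabbitmq/creds/<role>, authenticated with the
    // X-Vault-Token header. Assumes the engine is mounted at "rabbitmq".
    public static HttpRequest credsRequest(String vaultBaseUrl, String token, String role) {
        return HttpRequest.newBuilder()
                .uri(URI.create(vaultBaseUrl + "/v1/rabbitmq/creds/" + role))
                .header("X-Vault-Token", token)
                .GET()
                .build();
    }

    public static void main(String[] args) throws Exception {
        String token = System.getenv("VAULT_TOKEN"); // assumption: token provided via env
        if (token == null) {
            throw new IllegalStateException("Set VAULT_TOKEN to the Vault root token");
        }
        HttpRequest request = credsRequest("http://192.168.99.100:8200", token, "default");
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        // On success the JSON body carries the generated username and password
        // under "data", together with lease information.
        System.out.println(response.body());
    }
}
```

Every call creates a new RabbitMQ user, so the generated accounts pile up in the management console until their leases expire or are revoked.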

4. Sample application

Our sample application is pretty simple. It consists of two modules. The first of them, sender, is responsible for sending messages to RabbitMQ, while the second, listener, receives incoming messages. Both of them are Spring Boot applications that integrate with RabbitMQ and Vault using the following dependencies.

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-amqp</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-vault-config-rabbitmq</artifactId>

<version>2.0.2.RELEASE</version>

</dependency>

We need to provide some configuration settings in the bootstrap.yml file to integrate our application with Vault. First, we need to enable the RabbitMQ integration by setting the property spring.cloud.vault.rabbitmq.enabled to true. Of course, the Vault address and root token are required. It is also important to set the property spring.cloud.vault.rabbitmq.role to the name of the Vault role configured in step 3. Spring Cloud Vault injects the username and password generated by Vault into the application properties spring.rabbitmq.username and spring.rabbitmq.password, so the only RabbitMQ setting we need to configure in bootstrap.yml is the list of available cluster nodes.

spring:
  rabbitmq:
    addresses: 192.168.99.100:30000,192.168.99.100:30001,192.168.99.100:30002
  cloud:
    vault:
      uri: http://192.168.99.100:8200
      token: s.7DaENeiqLmsU5ZhEybBCRJhp
      rabbitmq:
        enabled: true
        role: default
        backend: rabbitmq

For test purposes you should enable highly available (mirrored) queues on RabbitMQ. For instructions on how to configure them using policies you can refer to my article RabbitMQ in cluster. The application works at the level of exchanges. The auto-configured connection factory is injected into the application and set on the RabbitTemplate bean.

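import java.util.Random;

import javax.annotation.PostConstruct;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.amqp.rabbit.connection.ConnectionFactory;
import org.springframework.amqp.rabbit.core.RabbitTemplate;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Bean;

// Order and OrderType are model classes defined elsewhere in the sample project.
@SpringBootApplication
public class Sender {

    private static final Logger LOGGER = LoggerFactory.getLogger("Sender");

    @Autowired
    RabbitTemplate template;

    public static void main(String[] args) {
        SpringApplication.run(Sender.class, args);
    }

    // Runs after dependency injection and publishes 1000 test orders.
    @PostConstruct
    public void send() {
        Random random = new Random();
        for (int i = 0; i < 1000; i++) {
            int id = random.nextInt(100000);
            template.convertAndSend(new Order(id, "TEST" + id, OrderType.values()[id % 2]));
        }
        LOGGER.info("Sending completed.");
    }

    // Reuses the auto-configured connection factory and targets the ex.example exchange.
    @Bean
    public RabbitTemplate template(ConnectionFactory connectionFactory) {
        RabbitTemplate rabbitTemplate = new RabbitTemplate(connectionFactory);
        rabbitTemplate.setExchange("ex.example");
        return rabbitTemplate;
    }
}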

Our listener app is connected only to the third node of the cluster (spring.rabbitmq.addresses=192.168.99.100:30002). However, the test queue is mirrored between all clustered nodes, so the listener is able to receive messages sent by the sender app. You can easily test it using my sample applications.

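import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.amqp.rabbit.annotation.EnableRabbit;
import org.springframework.amqp.rabbit.annotation.RabbitListener;
import org.springframework.amqp.rabbit.config.SimpleRabbitListenerContainerFactory;
import org.springframework.amqp.rabbit.connection.ConnectionFactory;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Bean;

// Order is a model class defined elsewhere in the sample project.
@SpringBootApplication
@EnableRabbit
public class Listener {

    private static final Logger LOGGER = LoggerFactory.getLogger("Listener");

    // Time of the first received message, used to log the elapsed time.
    private Long timestamp;

    public static void main(String[] args) {
        SpringApplication.run(Listener.class, args);
    }

    @RabbitListener(queues = "q.example")
    public void onMessage(Order order) {
        if (timestamp == null) {
            timestamp = System.currentTimeMillis();
        }
        LOGGER.info((System.currentTimeMillis() - timestamp) + " : " + order.toString());
    }

    // Starts with 10 concurrent consumers and allows scaling up to 20.
    @Bean
    public SimpleRabbitListenerContainerFactory rabbitListenerContainerFactory(ConnectionFactory connectionFactory) {
        SimpleRabbitListenerContainerFactory factory = new SimpleRabbitListenerContainerFactory();
        factory.setConnectionFactory(connectionFactory);
        factory.setConcurrentConsumers(10);
        factory.setMaxConcurrentConsumers(20);
        return factory;
    }
}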
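The mirroring that the listener relies on has to be configured on the broker side with a policy. One way to do it, shown here only as a sketch, is a PUT request to the RabbitMQ management HTTP API. The class name HaPolicySetup, the policy name ha-all and the pattern matching queues whose names start with "q." are my assumptions. The management address and the guest credentials are the ones used earlier for node #1.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper that declares a mirroring policy via the management API.
public class HaPolicySetup {

    // Policy body: mirror every queue whose name starts with "q." to all nodes.
    public static String policyJson() {
        return "{\"pattern\":\"^q[.]\",\"definition\":{\"ha-mode\":\"all\"},\"apply-to\":\"queues\"}";
    }

    // Builds PUT /api/policies/<vhost>/<name> with HTTP basic authentication.
    public static HttpRequest policyRequest(String managementBaseUrl, String policyName,
                                            String user, String password) {
        String credentials = Base64.getEncoder()
                .encodeToString((user + ":" + password).getBytes(StandardCharsets.UTF_8));
        return HttpRequest.newBuilder()
                // %2F is the URL-encoded name of the default virtual host "/".
                .uri(URI.create(managementBaseUrl + "/api/policies/%2F/" + policyName))
                .header("Authorization", "Basic " + credentials)
                .header("Content-Type", "application/json")
                .PUT(HttpRequest.BodyPublishers.ofString(policyJson()))
                .build();
    }

    public static void main(String[] args) throws Exception {
        // Assumption: node #1 exposes its management console on port 30010.
        HttpRequest request = policyRequest("http://192.168.99.100:30010", "ha-all", "guest", "guest");
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        // A 2xx status code means the policy was accepted.
        System.out.println(response.statusCode());
    }
}
```

Because policies are stored cluster-wide, it should be enough to send the request to a single node. After that, q.example should show up as mirrored in the management console of every node.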
